By Peter Wotherspoon, Biochemical Society

Okay, so we all know how this song and dance goes; it’s been in enough movies by this point:

- Humanity makes an intelligent machine

- The machine becomes self-aware

- Humanity doesn’t like the poor machine being self-aware and tries to turn it off

- The machine doesn’t like being turned off

- Robot death squads and global thermonuclear war

Here we have (under slightly more violent circumstances than would be ideal) what is generally thought of as the technological singularity: the idea that the creation of artificial super-intelligence will lead to an unstoppable cascade of technological advancements, because a computer intelligence smarter than us can in turn make another computer intelligence smarter than itself, and so on. So, with all the hype around AI recently, have we reached the tipping point?

The short answer is probably not yet. “But how can that possibly be?” I hear you ask, when we’ve already seen the creation of AIs capable of outperforming humans in tasks we once thought too computationally intensive for them to handle:

- Google’s DeepMind has produced AlphaGo, an AI capable of beating the world’s top Go players in a game with so many legal board positions (roughly 2.08 × 10¹⁷⁰) that playing well requires intuition and experience rather than simply raw processing power.

- Elon Musk’s OpenAI has bested a professional human player at Dota 2, a video game that is a considerable departure from the usual test benches for AI frameworks, like chess.

- In fact, Google’s AutoML AI was responsible for the creation of a daughter AI, NASNet, which outperforms anything we have made ourselves in the field of computer vision and object recognition.
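To put the Go figure in the list above into perspective, here is a rough back-of-the-envelope sketch in Python. The upper bound of 3³⁶¹ simply counts every way of marking each of the 361 points as empty, black or white. The checking speed of 10¹⁸ positions per second is purely a hypothetical assumption for illustration, not a measurement of any real machine.

```python
# Rough arithmetic on the size of Go's state space.
POINTS = 19 * 19               # 361 intersections on a full-size board
UPPER_BOUND = 3 ** POINTS      # each point is empty, black or white
LEGAL_POSITIONS = 2.08e170     # figure quoted in the text

# Hypothetical machine speed, chosen only for illustration (an assumption).
POSITIONS_PER_SECOND = 1e18
SECONDS_PER_YEAR = 365.25 * 24 * 3600

# Share of all arrangements that are legal positions.
fraction_legal = LEGAL_POSITIONS / UPPER_BOUND

# Time needed just to visit every legal position once.
years_to_enumerate = LEGAL_POSITIONS / POSITIONS_PER_SECOND / SECONDS_PER_YEAR

print(f"Upper bound has {len(str(UPPER_BOUND))} digits")
print(f"Share of arrangements that are legal: {fraction_legal:.1%}")
print(f"Years to visit every legal position: {years_to_enumerate:.1e}")
```

Even under that generous assumption, simply listing the positions is out of the question, which is why raw processing power alone does not get you very far in Go.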

So, if AI is already making AI, then where are Skynet and the T-800s?

The advent of “Neural Nets” is upon us… and has been for some time

Well, as it happens, these AIs exist thanks to, among other things, significant advancements in the field of machine learning. Machine learning is a paradigm in AI design that uses statistical techniques to allow an AI to iteratively improve at a task it is being taught. It has been around for a while: it corrects your texts, sorts your spam and recognizes your voice (‘Hey Siri’). The recent spotlight on machine learning and AI, though, is, as I’m sure you’ve heard, solidly fixed on ‘neural networks’, so called because they were vaguely inspired by the biological system of the same name (bonus points if you can guess which one).
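As a toy illustration of what “iteratively improve at a task” can look like, the sketch below teaches a single sigmoid unit (in effect, logistic regression) the logical AND of two inputs by repeatedly nudging its weights to reduce its error. The task, the learning rate and the number of passes are all assumptions chosen to keep the example small; real systems are far larger but follow the same loop of predict, measure the error, adjust.

```python
import math

def sigmoid(x):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

# Training data for logical AND: (inputs, expected output).
TRAINING_DATA = [
    ((0.0, 0.0), 0.0),
    ((0.0, 1.0), 0.0),
    ((1.0, 0.0), 0.0),
    ((1.0, 1.0), 1.0),
]

def predict(weights, bias, inputs):
    """Weighted sum of the inputs plus a bias, passed through the sigmoid."""
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)

def mean_loss(weights, bias):
    """Average cross-entropy between predictions and expected outputs."""
    total = 0.0
    for inputs, target in TRAINING_DATA:
        p = predict(weights, bias, inputs)
        p = min(max(p, 1e-12), 1.0 - 1e-12)  # guard against log(0)
        total -= target * math.log(p) + (1.0 - target) * math.log(1.0 - p)
    return total / len(TRAINING_DATA)

def train(epochs=5000, learning_rate=1.0):
    """Full-batch gradient descent, starting from all-zero weights."""
    weights, bias = [0.0, 0.0], 0.0
    n = len(TRAINING_DATA)
    for _ in range(epochs):
        grad_w, grad_b = [0.0, 0.0], 0.0
        for inputs, target in TRAINING_DATA:
            error = predict(weights, bias, inputs) - target
            for i, x in enumerate(inputs):
                grad_w[i] += error * x
            grad_b += error
        # Step each parameter a little way against its average gradient.
        weights = [w - learning_rate * g / n for w, g in zip(weights, grad_w)]
        bias -= learning_rate * grad_b / n
    return weights, bias

initial_loss = mean_loss([0.0, 0.0], 0.0)
weights, bias = train()
final_loss = mean_loss(weights, bias)
predictions = [round(predict(weights, bias, x)) for x, _ in TRAINING_DATA]

print(f"Loss before training: {initial_loss:.3f}, after: {final_loss:.3f}")
print("Rounded predictions:", predictions)
```

Nothing here was told the rule for AND; the weights simply drift towards values that make the error on the training data smaller.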

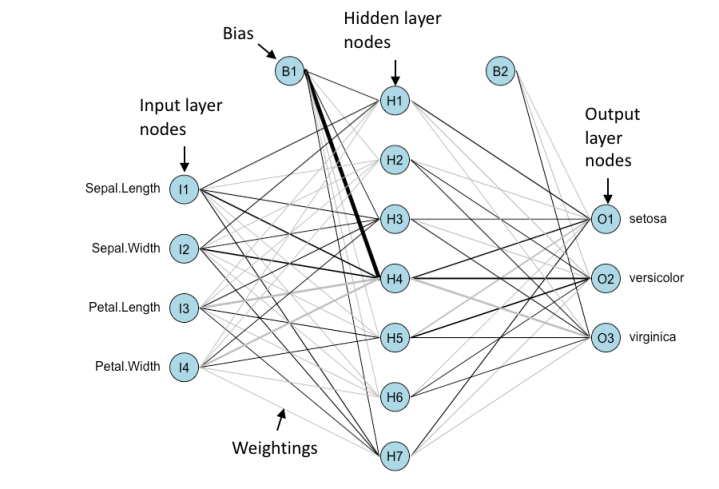

Essentially, an artificial neural network (ANN) is a set of input, hidden and output nodes in which numeric values are passed from the input nodes, through the hidden nodes, to the output nodes. As the values pass between nodes, mathematical operations are applied to them, and the value that emerges at the end is the output. Training the network basically boils down to tuning those operations so that the output matches the output you would expect, as determined by your training data. But even these AI models have existed in some capacity for a while, since the 1960s in fact (MADALINE was created and affectionately named at Stanford University to remove echoes over telephone lines). So then, what’s changed?

Example of a neural network showing input nodes for multiple input variables. Each input variable is connected to every node in the hidden layer and each connection has an associated weighting (how much that input affects that node). Each hidden node also has a bias with an associated weighting. The hidden nodes then take the sum of their weighted inputs and pass it to an activation function (just some form of mathematical operation). The outputs from the activation function are then passed on, in this case to the output layer. The output layer also has its own biases and weightings. Training the network is done by modifying the weightings.

Neural network built in R using nnet and visualized using: https://gist.githubusercontent.com/fawda123/7471137/raw/466c1474d0a505ff044412703516c34f1a4684a5/nnet_plot_update.r
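The flow of values described for the figure can be sketched in a few lines of plain Python. This is not the R `nnet` code used to draw the figure: the sigmoid activation, the layer sizes and every weight below are assumptions made up for illustration, and each bias is treated as a single number added to a node’s weighted sum.

```python
import math

def sigmoid(x):
    """One common choice of activation function (an assumption here)."""
    return 1.0 / (1.0 + math.exp(-x))

def layer_forward(inputs, weights, biases, activation):
    """Compute one layer: each node sums its weighted inputs, adds its
    bias, and passes the total through the activation function."""
    outputs = []
    for node_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(node_weights, inputs)) + bias
        outputs.append(activation(total))
    return outputs

def network_forward(inputs, hidden_weights, hidden_biases,
                    output_weights, output_biases):
    """Pass values from the input nodes through one hidden layer to the
    output layer."""
    hidden = layer_forward(inputs, hidden_weights, hidden_biases, sigmoid)
    return layer_forward(hidden, output_weights, output_biases, sigmoid)

# Three input variables, two hidden nodes, one output node.
# All numbers are arbitrary illustrative values.
inputs = [0.5, -1.0, 2.0]
hidden_weights = [[0.1, 0.4, -0.2],   # weights into hidden node 1
                  [-0.3, 0.2, 0.5]]   # weights into hidden node 2
hidden_biases = [0.0, 0.1]
output_weights = [[0.7, -0.6]]        # weights into the single output node
output_biases = [0.05]

output = network_forward(inputs, hidden_weights, hidden_biases,
                         output_weights, output_biases)
print("Network output:", output)
```

Changing any number in `hidden_weights` or `output_weights` changes the output, which is all that “modifying the weightings” means during training.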

Mainly, the advancements have come in the methods and programs used to train neural networks; the recognition that networks with more hidden nodes can be more accurate; and the emergence of programming languages and libraries well suited to handling large data loads and statistical processing. Of course, the improvements to hardware over the years have also had a significant impact on what we can get computers to do. However, the fact remains that we are designing and producing narrow AIs. We have no doubt moved closer to being able to produce an artificial general intelligence, but as it stands we are still far off.

The knowledge gap is a bit more than a gap

We cannot yet mimic the generalized unsupervised training of biological networks, or replicate the complex initial state of neurons that is in place as a result of genetic inheritance and evolution. Furthermore, the complexity of even the largest artificial neural networks still pales in comparison to that of biological networks, which are larger, constantly adjust due to neural plasticity and have far more sophisticated systems for dealing with learning biases.

All in all, we have a long way to go until we reach the technological singularity (probably), but that doesn’t mean we aren’t going to see some transformative improvements coming from the field of AI. So what’s the significance of AI to biologists if we’re not all going to be replaced by super-intelligent robot researchers in the near future? Well, neural network AIs are permeating pretty much every field in one way or another (I mean seriously, if you search for what you do alongside ‘machine learning’, you’ll probably find a few examples). So even if we’re not balancing on the fringe of a new age of civilization, it’s probably still a good idea to keep your eye on machine learning in the coming years, and maybe even take a step into programming if you feel so inclined. All you need is a computer after all (ominous chuckle…).

About me

I’m in the first year of an MIBTP DTP at the University of Birmingham and currently doing an internship at the Biochemical Society. My PhD is focused on bacterial outer membrane protein biogenesis and structural studies of the β-barrel assembly machinery. As for hobbies, refer to the above.

The Biochemical Society will be further exploring the uses of AI and machine learning in our Biology Week Debate (9 October) at the Royal Institution in collaboration with the Royal Society of Biology and the British Pharmacological Society. Find out all the details and get your tickets here.

Interested in learning R? Register for the R for Biochemists 101 online training course, starting 10 September 2018, developed by the Biochemical Society.
